Merge pull request #925 from Mjrovai/xiaoml-kit

Xiaoml kit - Img Class Lab - Deploy with Edge Impulse

@@ -344,22 +344,27 @@ And, so, we have as a training result:


The model profile predicts **233 KB of RAM and 546 KB of Flash**, indicating no problem for the Xiao ESP32S3, which has 8 MB of PSRAM. Additionally, the Studio indicates a **latency of around 1160 ms**, which is very high. However, this is to be expected: the estimate is computed for the ESP-EYE, whose CPU is an Xtensa LX6, while the ESP32S3 uses a newer and more powerful Xtensa LX7.


> With the test data, we also achieved 100% accuracy, even with a quantized INT8 model. This result is not typical in real projects, but our project here is relatively simple, with two objects that are very distinctive from each other.


## Model Deployment


We can deploy the trained model:


- As a `.TFLITE` file, to be used on **SenseCraft AI**
- As an `Arduino Library`, from **Edge Impulse Studio**.


Let's start with SenseCraft AI, which is more straightforward and intuitive.


### Model Deployment on the SenseCraft AI


On the **Dashboard**, it is possible to download the trained model in several formats. Let's download `TensorFlow Lite (int8 quantized)`, which has a size of 623 KB.


\noindent


{width=85% fig-align="center"}


### Deploy the model on the SenseCraft AI Studio


On **SenseCraft AI Studio**, go to the `Workspace` tab, select `XIAO ESP32S3`, the corresponding Port, and connect the device.


You should see the last model that was uploaded to the device. Select the green button `[Upload Model]`. A pop-up window will prompt you to enter the model name, the model file, and the class names (**objects**). We should use labels in alphabetical order: `0: background`, `1: box`, and `2: wheel`, and then press `[Send]`.


\noindent


{width=85% fig-align="center"}


@@ -373,7 +378,7 @@ On the **Device Logger**, we can view the Serial Monitor, where we can observe t


\noindent


{width=85% fig-align="center"}


#### Post-Processing


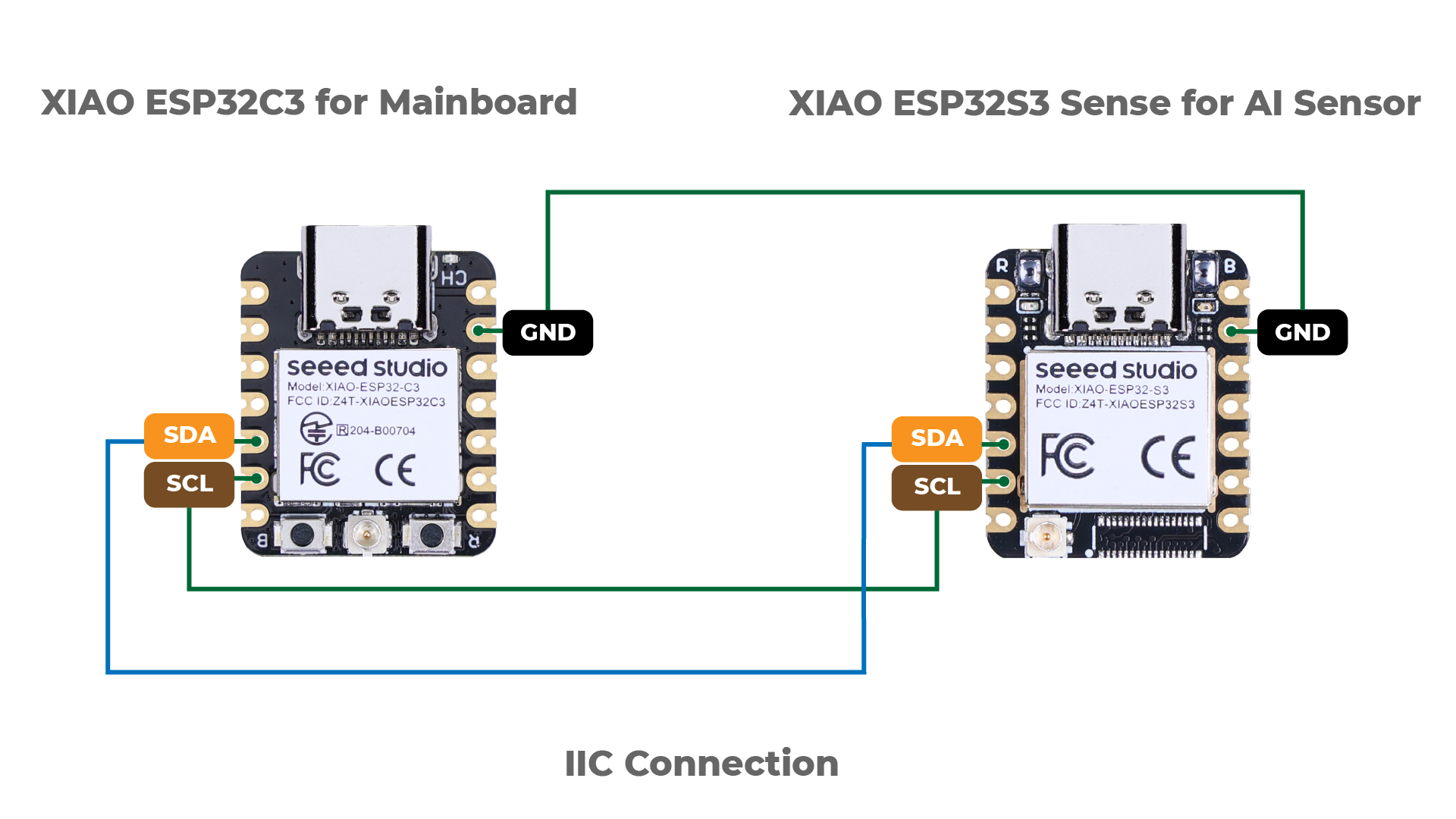

It is possible to obtain the output of a model inference, including Latency, Class ID, and Confidence, as shown on the Device Logger in SenseCraft AI. This allows us to utilize the **XIAO ESP32S3 Sense as an AI sensor**. In other words, we can retrieve the model data using different communication protocols such as MQTT, UART, I2C, or SPI, depending on our project requirements.


@@ -383,7 +388,229 @@ Below is an example of a connection using the I2C bus.


{width=75% fig-align="center"}


Please refer to the [Seeed Studio Wiki](https://wiki.seeedstudio.com/sensecraft-ai/tutorials/sensecraft-ai-output-libraries-xiao/) for more information.


### Model Deployment as an Arduino Library at EI Studio


In the **Deploy** section of Edge Impulse Studio, select `Arduino library`, `TensorFlow Lite`, and `Quantized(int8)`, then press `[Build]`. The trained model will be downloaded as a .zip Arduino library:


\noindent


{width=80% fig-align="center"}


Open the Arduino IDE and, under **Sketch**, go to **Include Library** and **Add .ZIP Library**. Next, select the file downloaded from Edge Impulse Studio and press `[Open]`.


\noindent


{width=80% fig-align="center"}


Go to the Arduino IDE `Examples` and look for the project by its name (in this case, "Box_versus_Wheel_...inferencing"). Open `esp32` -> `esp32_camera`. The sketch `esp32_camera.ino` will open in the IDE.


This sketch was developed for the standard ESP32 and will not work with the XIAO ESP32S3 Sense without modification. Let's download the modified version from the project GitHub: [Image_class_XIAOML-Kit.ino](https://github.com/Mjrovai/XIAO-ESP32S3-Sense/blob/main/XIAOML_Kit_code/image_class_XIAOML-Kit/image_class_XIAOML-Kit.ino).


#### XIAO ESP32S3 Image Classification Code Explained


The code captures images from the onboard camera, processes them, and classifies them (in this case, "Box", "Wheel", or "Background") using the trained model on EI Studio. It runs continuously, performing real-time inference on the edge device.


In short:


Camera → JPEG Image → RGB888 Conversion → Resize to 96x96 → Neural Network → Classification Results → Serial Output


##### Key Components


1. **Library Includes and Dependencies**


```cpp
#include <Box_versus_Wheel_-_XIAO_ESP32S3_inferencing.h>
#include "edge-impulse-sdk/dsp/image/image.hpp"
#include "esp_camera.h"
```


- **Edge Impulse Inference Library**: Contains our trained model and inference engine
- **Image Processing**: Provides functions for image manipulation
- **ESP Camera**: Hardware interface for the camera module


2. **Camera Pin Configurations**


The XIAO ESP32S3 Sense can work with different camera sensors (OV2640 or OV3660), which may have different pin configurations. The code defines three possible configurations:


```cpp
// Configuration 1: Most common OV2640 configuration
#define CONFIG_1_XCLK_GPIO_NUM 10
#define CONFIG_1_SIOD_GPIO_NUM 40
#define CONFIG_1_SIOC_GPIO_NUM 39
// ... more pins
```


This flexibility allows the code to automatically try different pin mappings if the first one doesn't work, making it more robust across different hardware revisions.


3. **Memory Management Settings**


```cpp
#define EI_CAMERA_RAW_FRAME_BUFFER_COLS 320
#define EI_CAMERA_RAW_FRAME_BUFFER_ROWS 240
#define EI_CLASSIFIER_ALLOCATION_HEAP 1
```


- **Frame Buffer Size**: Defines the raw image size (320x240 pixels)
- **Heap Allocation**: Tells the Edge Impulse SDK to allocate its working memory dynamically (on the heap) rather than statically
- **PSRAM Support**: The ESP32S3 has 8 MB of PSRAM for storing large data like images


##### `setup()` - Initialization


```cpp
void setup() {
  Serial.begin(115200);
  while (!Serial);

  if (ei_camera_init() == false) {
    ei_printf("Failed to initialize Camera!\r\n");
  } else {
    ei_printf("Camera initialized\r\n");
  }

  ei_sleep(2000); // Wait 2 seconds before starting
}
```


This function:


1. Initializes serial communication for debugging output
2. Initializes the camera with automatic configuration detection
3. Waits 2 seconds before starting continuous inference


##### `loop()` - Main Processing Loop


The loop performs these steps continuously:


**Step 1: Memory Allocation**


```cpp
snapshot_buf = (uint8_t*)ps_malloc(EI_CAMERA_RAW_FRAME_BUFFER_COLS *
                                   EI_CAMERA_RAW_FRAME_BUFFER_ROWS *
                                   EI_CAMERA_FRAME_BYTE_SIZE);
```


Allocates memory for the image buffer, preferring PSRAM (the large external RAM: slower than the internal SRAM, but with room for a full 320x240 RGB888 frame) and falling back to the regular heap if needed.


**Step 2: Image Capture**


```cpp
if (ei_camera_capture((size_t)EI_CLASSIFIER_INPUT_WIDTH,
                      (size_t)EI_CLASSIFIER_INPUT_HEIGHT,
                      snapshot_buf) == false) {
  ei_printf("Failed to capture image\r\n");
  free(snapshot_buf);
  return;
}
```


Captures an image from the camera and stores it in the buffer.


**Step 3: Run Inference**


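```cpp
ei_impulse_result_t result = { 0 };
// `signal` (an ei::signal_t set up earlier in loop()) gives the classifier access to snapshot_buf;
// the last argument (debug) is false, so no extra diagnostic output is printed
EI_IMPULSE_ERROR err = run_classifier(&signal, &result, false);
```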


Runs the machine learning model on the captured image.
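
Between capture and inference, the pixels reach `run_classifier()` through `signal`. For Edge Impulse image models, that object's `get_data` callback (of the form `int(size_t offset, size_t length, float *out_ptr)`, returning 0 on success) delivers one float per pixel, packed as `(R << 16) | (G << 8) | B`. Below is a minimal, hardware-free sketch of that packing step; the function name and the `bgr_input` flag are hypothetical, not taken from the project's code, so check which channel order your RGB888 conversion actually produces.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical helper: packs interleaved 8-bit pixels (3 bytes each) into one
// float per pixel as (R << 16) | (G << 8) | B. If the buffer is stored as
// B,G,R (bgr_input = true), the first and third bytes are swapped.
// `offset` and `length` are counted in pixels, not bytes.
int pack_rgb888_to_float(const uint8_t *buf, size_t offset, size_t length,
                         float *out_ptr, bool bgr_input) {
  assert(buf != nullptr && out_ptr != nullptr);
  for (size_t i = 0; i < length; i++) {
    const uint8_t *px = buf + (offset + i) * 3;  // start of this pixel
    uint32_t r = bgr_input ? px[2] : px[0];
    uint32_t g = px[1];
    uint32_t b = bgr_input ? px[0] : px[2];
    // The packed value is below 2^24, so a float holds it exactly.
    out_ptr[i] = static_cast<float>((r << 16) | (g << 8) | b);
  }
  return 0;  // 0 signals success to the caller
}
```

In the sketch, a callback like this would read from `snapshot_buf`, with the classifier requesting pixels in chunks via `offset` and `length`.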


**Step 4: Output Results**


```cpp
for (uint16_t i = 0; i < EI_CLASSIFIER_LABEL_COUNT; i++) {
  ei_printf(" %s: %.5f\r\n",
            ei_classifier_inferencing_categories[i],
            result.classification[i].value);
}
```


Prints the classification results showing confidence scores for each category.
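
The loop above prints every score; to act on the result, it is usually reduced to a single decision. A minimal sketch, assuming the scores have been copied from `result.classification[i].value` into a plain `float` array; `top_class()` and the threshold value are illustrative assumptions, not part of the project's sketch.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical helper: returns the index of the highest score, or -1 when
// there are no scores or the best one is below min_confidence.
int top_class(const float *scores, size_t count, float min_confidence) {
  assert(scores != nullptr);
  if (count == 0) return -1;
  size_t best = 0;
  for (size_t i = 1; i < count; i++) {
    if (scores[i] > scores[best]) best = i;  // keep the first maximum on ties
  }
  return scores[best] >= min_confidence ? static_cast<int>(best) : -1;
}
```

Returning -1 below the threshold lets the caller treat low-confidence frames as "uncertain" instead of forcing a label; the index, when valid, also selects the name in `ei_classifier_inferencing_categories`.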


##### `ei_camera_init()` - Smart Camera Initialization


This function implements an intelligent initialization sequence:


```cpp
bool ei_camera_init(void) {
  // Try Configuration 1 (OV2640 common)
  update_camera_config(1);
  esp_err_t err = esp_camera_init(&camera_config);
  if (err == ESP_OK) goto camera_init_success;

  // Try Configuration 2 (OV3660)
  esp_camera_deinit();
  update_camera_config(2);
  err = esp_camera_init(&camera_config);
  if (err == ESP_OK) goto camera_init_success;

  // Continue trying other configurations...
}
```


The function:


1. Tries multiple pin configurations
2. Tests different clock frequencies (10 MHz or 16 MHz)
3. Attempts PSRAM first, then falls back to DRAM
4. Applies sensor-specific settings based on detected hardware
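
The `goto` chain in `ei_camera_init()` expresses "try each configuration until one initializes." The same control flow can be written as a loop. In this hypothetical sketch, `try_config` stands in for `update_camera_config(n)` followed by `esp_camera_init()`, so the logic runs without hardware; it is an alternative structure, not the project's code.

```cpp
#include <cassert>
#include <functional>

// Hypothetical sketch: calls try_config(1), try_config(2), ... in order and
// returns the number of the first configuration that succeeds, or 0 if none do.
int first_working_config(int num_configs,
                         const std::function<bool(int)> &try_config) {
  assert(num_configs > 0);
  for (int cfg = 1; cfg <= num_configs; cfg++) {
    if (try_config(cfg)) return cfg;  // stop at the first success
  }
  return 0;  // no configuration worked
}
```

On the board, the callback would also call `esp_camera_deinit()` after a failed attempt, as the excerpt above does before trying configuration 2.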


##### `ei_camera_capture()` - Image Processing Pipeline


```cpp
bool ei_camera_capture(uint32_t img_width, uint32_t img_height, uint8_t *out_buf) {
  // 1. Get frame from camera
  camera_fb_t *fb = esp_camera_fb_get();

  // 2. Convert JPEG to RGB888 format
  bool converted = fmt2rgb888(fb->buf, fb->len, PIXFORMAT_JPEG, snapshot_buf);

  // 3. Return frame buffer to camera driver
  esp_camera_fb_return(fb);

  // 4. Resize if needed
  if (do_resize) {
    ei::image::processing::crop_and_interpolate_rgb888(...);
  }
}
```


This function:


1. Captures a JPEG image from the camera
2. Converts it to RGB888 format (required by the ML model)
3. Resizes the image to match the model's input size (96x96 pixels)
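
Going from the 320x240 frame to the 96x96 model input changes the aspect ratio as well as the size. The function below is a simplified, hypothetical stand-in for that step: it center-crops the frame to the target aspect ratio (240x240 for a 320x240 source) and then resizes with nearest-neighbor sampling. The SDK's `crop_and_interpolate_rgb888()` interpolates instead, as its name suggests, so its output will differ slightly.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Simplified sketch: center crop to dst_w:dst_h, then nearest-neighbor resize.
// Buffers are interleaved RGB888 (3 bytes per pixel, row-major).
std::vector<uint8_t> crop_resize_rgb888(const std::vector<uint8_t> &src,
                                        int src_w, int src_h,
                                        int dst_w, int dst_h) {
  assert(src.size() == static_cast<size_t>(src_w) * src_h * 3);
  // Largest centered crop that has the destination aspect ratio.
  int crop_w = src_w, crop_h = src_h;
  if (static_cast<long>(src_w) * dst_h > static_cast<long>(src_h) * dst_w) {
    crop_w = static_cast<int>(static_cast<long>(src_h) * dst_w / dst_h);  // too wide
  } else {
    crop_h = static_cast<int>(static_cast<long>(src_w) * dst_h / dst_w);  // too tall
  }
  const int x0 = (src_w - crop_w) / 2;
  const int y0 = (src_h - crop_h) / 2;

  std::vector<uint8_t> dst(static_cast<size_t>(dst_w) * dst_h * 3);
  for (int y = 0; y < dst_h; y++) {
    const int sy = y0 + y * crop_h / dst_h;  // nearest source row
    for (int x = 0; x < dst_w; x++) {
      const int sx = x0 + x * crop_w / dst_w;  // nearest source column
      for (int c = 0; c < 3; c++) {
        dst[(static_cast<size_t>(y) * dst_w + x) * 3 + c] =
            src[(static_cast<size_t>(sy) * src_w + sx) * 3 + c];
      }
    }
  }
  return dst;
}
```

For a 320x240 frame, the crop drops 40 columns on each side before scaling down to 96x96.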


### Inference


- Upload the code to the XIAO ESP32S3 Sense.


> ⚠️ **Attention**
>
> - The Xiao ESP32S3 **MUST** have PSRAM enabled. You can check it in the Arduino IDE menu: `Tools` --> `PSRAM: OPI PSRAM`
> - The Arduino board package (`esp32 by Espressif Systems`) should be **version 2.0.17**. Do not update it.


\noindent


{width=90% fig-align="center"}


- Open the Serial Monitor.
- Point the camera at the objects and check the result on the Serial Monitor.


\noindent


{width=90% fig-align="center"}


### Post-Processing


In edge AI applications, the inference result is only as valuable as our ability to act upon it. While serial output provides detailed information for debugging and development, real-world deployments require immediate, human-readable feedback that doesn't depend on external monitors or connections.


The XIAOML Kit's tiny 0.42" OLED display (72×40 pixels) serves as a crucial post-processing component. It turns raw ML inference results into immediate, human-readable feedback by displaying detected class names and confidence levels directly on the device. This removes the need for external monitors and enables truly standalone edge AI deployments in industrial, agricultural, or retail environments where instant visual confirmation of AI predictions is essential.


So, let's modify the sketch so that it automatically adapts to the model trained on Edge Impulse by reading the class names and class count directly from the model. The display will show abbreviated class names (3 letters) in a larger font for better visibility on the tiny 72x40 pixel display. Download the code from GitHub: [XIAOML-Kit-Img_Class_OLED_Gen](https://github.com/Mjrovai/XIAO-ESP32S3-Sense/tree/main/XIAOML_Kit_code/XIAOML-Kit-Img_Class_OLED_Gen).
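
Because the class names come from the model itself (the `ei_classifier_inferencing_categories` array used in the inference loop), a generic sketch has to derive the short OLED labels at run time. Below is a minimal sketch of one way to do it, assuming the abbreviation is simply the first three letters in upper case; the helper names and the "BOX 97%" text layout are hypothetical and may differ from the project's code.

```cpp
#include <cassert>
#include <cctype>
#include <cstdio>
#include <string>

// Hypothetical helper: first three characters of a label, upper-cased
// (e.g., "background" -> "BAC"). Labels shorter than three characters are kept whole.
std::string abbreviate_label(const std::string &label) {
  std::string abbr = label.substr(0, 3);
  for (char &ch : abbr) {
    ch = static_cast<char>(std::toupper(static_cast<unsigned char>(ch)));
  }
  return abbr;
}

// Builds a short display line such as "BOX 97%" (confidence rounded to a whole percent).
std::string oled_line(const std::string &label, float confidence) {
  assert(confidence >= 0.0f && confidence <= 1.0f);
  char buf[16];
  std::snprintf(buf, sizeof(buf), "%s %d%%", abbreviate_label(label).c_str(),
                static_cast<int>(confidence * 100.0f + 0.5f));
  return buf;
}
```

Short, fixed-width labels leave room for a larger font on the 72x40 display.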


Running the code, we can see the result:


\noindent


{width=90% fig-align="center"}


## Summary


@@ -408,3 +635,4 @@ This foundation in image classification prepares you for more complex computer v


- [Edge Impulse Project](https://studio.edgeimpulse.com/public/757065/live)
- [XIAO as an AI Sensor](https://wiki.seeedstudio.com/sensecraft-ai/tutorials/sensecraft-ai-output-libraries-xiao/)
- [Seeed Arduino SSCMA Library](https://github.com/Seeed-Studio/Seeed_Arduino_SSCMA)
- [XIAOML Kit Code](https://github.com/Mjrovai/XIAO-ESP32S3-Sense/tree/main/XIAOML_Kit_code)


@@ -1,13 +1,12 @@


# Keyword Spotting (KWS) {.unnumbered}


## Overview


Keyword Spotting (KWS) is integral to many voice recognition systems, enabling devices to respond to specific words or phrases. While this technology underpins popular devices like Google Assistant or Amazon Alexa, it's equally applicable and achievable on smaller, low-power devices. This lab will guide you through implementing a KWS system using TinyML on the XIAO ESP32S3 microcontroller board.


The XIAO ESP32S3, equipped with Espressif's ESP32-S3 chip, is a compact and potent microcontroller offering a dual-core Xtensa LX7 processor, integrated Wi-Fi, and Bluetooth. Its balance of computational power, energy efficiency, and versatile connectivity makes it a fantastic platform for TinyML applications. Also, with its expansion board, we will have access to the "sense" part of the device, which has a camera, an SD card slot, and a **digital microphone**. The integrated microphone and the SD card will be essential in this project.


We will use the [Edge Impulse Studio](https://www.edgeimpulse.com/), a powerful, user-friendly platform that simplifies creating and deploying machine learning models onto edge devices. We'll train a KWS model step-by-step, optimizing and deploying it onto the XIAO ESP32S3 Sense.


@@ -17,7 +16,22 @@ Leveraging our experience with TensorFlow Lite for Microcontrollers (the engine


As we progress through the lab, we'll break down each stage of the process, from data collection and preparation to model training and deployment, to provide a comprehensive understanding of implementing a KWS system on a microcontroller.


::: callout-tip
## Learning Objectives

- **Understand Voice Assistant Architecture** including cascaded detection systems and the role of edge-based keyword spotting as the first stage of voice processing pipelines
- **Master Audio Data Collection Techniques** using both offline methods (XIAO ESP32S3 microphone with SD card storage) and online methods (smartphone integration with Edge Impulse Studio)
- **Implement Digital Signal Processing for Audio** including I2S protocol fundamentals, audio sampling at 16 kHz/16-bit, and conversion between time-domain audio signals and frequency-domain features using MFCC
- **Train Convolutional Neural Networks for Audio Classification** using transfer learning techniques, data augmentation strategies, and model optimization for four-class classification (YES, NO, NOISE, UNKNOWN)
- **Deploy Optimized Models on Microcontrollers** through quantization (INT8), memory management with PSRAM, and real-time inference optimization for embedded systems
- **Develop Complete Post-Processing Pipelines** including confidence thresholding, GPIO control for external devices, OLED display integration, and creating standalone AI sensor systems
- **Compare Development Workflows** between no-code platforms (Edge Impulse Studio) and traditional embedded programming (Arduino IDE) for TinyML applications

:::


## The KWS Project


### How does a voice assistant work?


Keyword Spotting (KWS) is critical to many voice assistants, enabling devices to respond to specific words or phrases. To start, it is essential to realize that voice assistants on the market, like Google Home or Amazon Echo Dot, only react to humans when they are "woken up" by particular keywords, such as "Hey Google" for the first one and "Alexa" for the second.


@@ -41,9 +55,9 @@ The video below shows an example where I emulate a Google Assistant on a Raspber


In this lab, we will focus on Stage 1 (KWS or Keyword Spotting), using the XIAO ESP32S3 Sense, which has a digital microphone, to spot the keyword.


### The Inference Pipeline


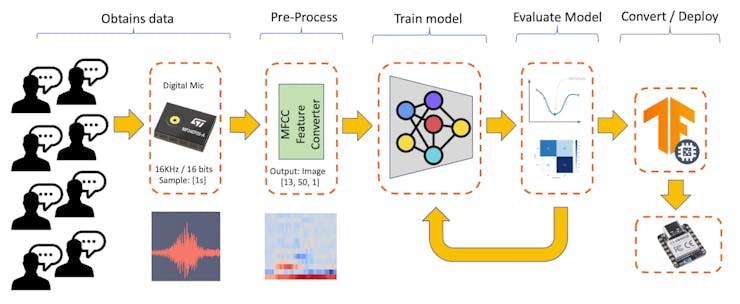


The diagram below will give an idea of how the final KWS application should work (during inference):


\noindent


@@ -57,14 +71,14 @@ Our KWS application will recognize four classes of sound:


> For real-world projects, it is always advisable to include classes other than the keywords, such as "Noise" (or Background) and "Unknown".


### The Machine Learning workflow


The main component of the KWS application is its model. So, we must train such a model with our specific keywords, noise, and other words (the "unknown"):


\noindent


## Dataset


The critical component of the Machine Learning workflow is the **dataset**. Once we have decided on specific keywords (*YES* and *NO*), we can take advantage of the dataset developed by Pete Warden, ["Speech Commands: A Dataset for Limited-Vocabulary Speech Recognition"](https://arxiv.org/pdf/1804.03209.pdf). This dataset has 35 keywords (with more than 1,000 samples each), such as yes, no, stop, and go. For our keywords, we can get 1,500 samples of *yes* and *no*.


@@ -73,7 +87,7 @@ You can download a small portion of the dataset from Edge Studio ([Keyword spott


- Download the [keywords dataset](https://cdn.edgeimpulse.com/datasets/keywords2.zip).
- Unzip the file in a location of your choice.


Although we have a lot of data from Pete's dataset, collecting some words spoken by us is advised. When working with accelerometers, creating a dataset with data captured by the same type of sensor was essential. In the case of *sound*, the classification differs because it involves, in reality, *audio* data.


> The key difference between sound and audio is their form of energy. Sound is mechanical wave energy (longitudinal sound waves) that propagates through a medium, causing variations in pressure within the medium. Audio is made of electrical energy (analog or digital signals) that represents sound electrically.


@@ -90,7 +104,7 @@ In the lab Motion Classification and Anomaly Detection, we connect our device di


> If we want to collect audio data directly in the Studio, we can use any smartphone connected to it online. We will not explore this option here, but you can easily follow the EI [documentation](https://docs.edgeimpulse.com/docs/development-platforms/using-your-mobile-phone).


### Capturing (offline) Audio Data with the XIAO ESP32S3 Sense


The built-in microphone is the [MSM261D3526H1CPM](https://files.seeedstudio.com/wiki/XIAO-BLE/mic-MSM261D3526H1CPM-ENG.pdf), a PDM digital-output MEMS microphone with multiple modes. Internally, it is connected to the ESP32S3 via an I2S bus using pins IO42 (Clock) and IO41 (Data).


@@ -114,9 +128,14 @@ The I2S protocol consists of at least three lines:


In an I2S data stream, the data is sent as a sequence of frames, each containing a left-channel word and a right-channel word. This makes I2S particularly suited for transmitting stereo audio data. However, it can also be used for mono or multichannel audio with additional data lines.
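
To make the frame structure concrete: a stereo I2S stream read into memory arrives as alternating left and right words. Below is a minimal, illustrative sketch, assuming 16-bit words in left-then-right order; the helper name is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Illustrative only: splits interleaved stereo frames [L0, R0, L1, R1, ...]
// (one 16-bit word per channel) into separate left and right channels.
void deinterleave_stereo(const std::vector<int16_t> &frames,
                         std::vector<int16_t> &left,
                         std::vector<int16_t> &right) {
  assert(frames.size() % 2 == 0);  // every frame holds a left and a right word
  left.clear();
  right.clear();
  for (size_t i = 0; i < frames.size(); i += 2) {
    left.push_back(frames[i]);       // first word of the frame
    right.push_back(frames[i + 1]);  // second word of the frame
  }
}
```

In this lab, the XIAO's PDM microphone is read as a single (mono) channel, so no deinterleaving is needed there; the sketch only illustrates the framing.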


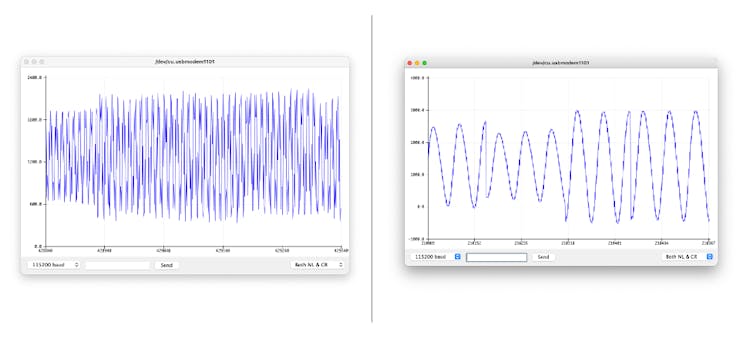


Let's start by understanding how to capture raw data using the microphone. Go to the [GitHub project](https://github.com/Mjrovai/XIAO-ESP32S3-Sense) and download the sketch: [XiaoEsp32s3_Mic_Test](https://github.com/Mjrovai/XIAO-ESP32S3-Sense/tree/main/Mic_Test/XiaoEsp32s3_Mic_Test):


> ⚠️ **Attention**
>
> - The Xiao ESP32S3 **MUST** have PSRAM enabled. You can check it in the Arduino IDE menu: `Tools` --> `PSRAM: OPI PSRAM`
> - The Arduino board package (`esp32 by Espressif Systems`) should be **version 2.0.17**. Please do not update it.


```cpp
/*
XIAO ESP32S3 Simple Mic Test
*/

@@ -162,7 +181,7 @@ Below is a test "whispering" in two different tones.


\noindent


### Save Recorded Sound Samples


Let's use the onboard SD card reader to save .wav audio files; we must enable the XIAO PSRAM first.


@@ -178,13 +197,13 @@ Turn the PSRAM function of the ESP-32 chip on (Arduino IDE): Tools>PSRAM: "OPI P


\noindent


{width=95% fig-align="center"}


- Download the sketch [Wav_Record_dataset](https://github.com/Mjrovai/XIAO-ESP32S3-Sense/tree/main/Wav_Record_dataset), which you can find on the project's GitHub.


This code records audio using the I2S interface of the Seeed XIAO ESP32S3 Sense board, saves the recording as a .wav file on an SD card, and lets you control the recording process through commands sent from the Serial Monitor. The audio file name is customizable (it should be the class label to be used in training), and multiple recordings can be made, each saved in a new file. The code also includes functionality to increase the volume of the recordings.


Let's break down the most essential parts of it:


```cpp
#include <I2S.h>
#include "FS.h"
#include "SD.h"

@@ -193,7 +212,7 @@ Let's break down the most essential parts of it:


Those are the necessary libraries for the program. I2S.h allows for audio input, FS.h provides file system handling capabilities, SD.h enables the program to interact with an SD card, and SPI.h handles the SPI communication with the SD card.


```cpp
#define RECORD_TIME 10
#define SAMPLE_RATE 16000U
#define SAMPLE_BITS 16

@@ -208,7 +227,7 @@ Here, various constants are defined for the program.


- **WAV_HEADER_SIZE** specifies the size of the .wav file header.
- **VOLUME_GAIN** is used to increase the volume of the recording.


```cpp
int fileNumber = 1;
String baseFileName;
bool isRecording = false;

@@ -216,7 +235,7 @@ bool isRecording = false;


These variables keep track of the current file number (to create unique file names), the base file name, and whether the system is currently recording.


```cpp
void setup() {
  Serial.begin(115200);
  while (!Serial);

@@ -237,7 +256,7 @@ void setup() {


The setup function initializes serial communication, the I2S interface for audio input, and the SD card interface. If the I2S fails to initialize or the SD card fails to mount, it prints an error message and halts execution.


```cpp
void loop() {
  if (Serial.available() > 0) {
    String command = Serial.readStringUntil('\n');

@@ -265,7 +284,7 @@ void loop() {


In the main loop, the program waits for a command from the Serial Monitor. If the command is `rec`, the program starts recording. Otherwise, the command is assumed to be the base name for the .wav files. If it is currently recording and a base file name is set, it records the audio and saves it as a .wav file. The file names are generated by appending the file number to the base file name.


```cpp
void record_wav(String fileName)
{
  ...

@@ -286,7 +305,7 @@ void record_wav(String fileName)


This function records audio and saves it as a .wav file with the given name. It starts by initializing the `sample_size` and `record_size` variables; `record_size` is calculated from the sample rate, sample size, and desired recording time. Let's dig into the essential sections:
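
As a worked example of that calculation: with the constants shown earlier (`RECORD_TIME` = 10 s, `SAMPLE_RATE` = 16 kHz, `SAMPLE_BITS` = 16) and a single channel, one recording needs 10 × 16,000 × 2 = 320,000 bytes of audio data, plus the WAV header. Below is a small sketch of the formula, assuming mono audio; the function name is illustrative, not the project's.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper: bytes needed for `seconds` of mono PCM audio
// at `sample_rate` Hz with `sample_bits` bits per sample.
uint32_t pcm_record_size(uint32_t seconds, uint32_t sample_rate,
                         uint32_t sample_bits) {
  assert(sample_bits % 8 == 0);  // whole bytes per sample
  return seconds * sample_rate * (sample_bits / 8);
}
```

A buffer of roughly 320 KB fits comfortably in the 8 MB PSRAM, which the sketch uses for the recording buffer.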


```cpp
File file = SD.open(fileName.c_str(), FILE_WRITE);
// Write the header to the WAV file
uint8_t wav_header[WAV_HEADER_SIZE];

@@ -296,7 +315,7 @@ file.write(wav_header, WAV_HEADER_SIZE);


This section of the code opens the file on the SD card for writing and then generates the .wav file header using the generate_wav_header function. It then writes the header to the file.
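
The project's `generate_wav_header()` is only partially shown here. For reference, a canonical PCM WAV header is 44 bytes: a `RIFF`/`WAVE` preamble, a 16-byte `fmt ` chunk describing the format, and a `data` chunk header giving the payload size, with all multi-byte fields little-endian. The function below is an illustrative reimplementation assuming mono, 16-bit PCM; it is not the project's code, and its name is hypothetical.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>

// Little-endian writers for 32- and 16-bit fields.
static void put_le32(uint8_t *p, uint32_t v) {
  for (int i = 0; i < 4; i++) p[i] = static_cast<uint8_t>(v >> (8 * i));
}
static void put_le16(uint8_t *p, uint16_t v) {
  p[0] = static_cast<uint8_t>(v & 0xFF);
  p[1] = static_cast<uint8_t>(v >> 8);
}

// Fills a 44-byte header for mono, 16-bit PCM with `data_size` bytes of audio.
void make_wav_header(uint8_t header[44], uint32_t data_size, uint32_t sample_rate) {
  const uint16_t channels = 1, bits = 16;
  const uint16_t block_align = channels * bits / 8;  // bytes per sample frame
  assert(sample_rate > 0);
  std::memcpy(header, "RIFF", 4);
  put_le32(header + 4, 36 + data_size);   // file size minus the first 8 bytes
  std::memcpy(header + 8, "WAVEfmt ", 8);
  put_le32(header + 16, 16);              // size of the fmt chunk body
  put_le16(header + 20, 1);               // audio format 1 = PCM
  put_le16(header + 22, channels);
  put_le32(header + 24, sample_rate);
  put_le32(header + 28, sample_rate * block_align);  // byte rate
  put_le16(header + 32, block_align);
  put_le16(header + 34, bits);
  std::memcpy(header + 36, "data", 4);
  put_le32(header + 40, data_size);       // PCM payload size in bytes
}
```

For a 10-second recording at 16 kHz, `data_size` would be 320,000 bytes and the byte rate 32,000 bytes per second.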


```cpp
// PSRAM malloc for recording
rec_buffer = (uint8_t *)ps_malloc(record_size);
if (rec_buffer == NULL) {

@@ -309,7 +328,7 @@ Serial.printf("Buffer: %d bytes\n", ESP.getPsramSize()


The ps_malloc function allocates memory in the PSRAM for the recording. If the allocation fails (i.e., rec_buffer is NULL), it prints an error message and halts execution.


```cpp
// Start recording
esp_i2s::i2s_read(esp_i2s::I2S_NUM_0,
                  rec_buffer,

@@ -325,7 +344,7 @@ if (sample_size == 0) {


The i2s_read function reads audio data from the microphone into rec_buffer. It prints an error message if no data is read (sample_size is 0).


```cpp
// Increase volume
for (uint32_t i = 0; i < sample_size; i += SAMPLE_BITS/8) {
  (*(uint16_t *)(rec_buffer+i)) <<= VOLUME_GAIN;

@@ -334,7 +353,7 @@ for (uint32_t i = 0; i < sample_size; i += SAMPLE_BITS/8) {


This section of the code increases the recording volume by left-shifting each 16-bit sample by `VOLUME_GAIN` bits.
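
Shifting a sample left by `VOLUME_GAIN` bits multiplies it by 2 raised to the power `VOLUME_GAIN`, but loud samples overflow the 16-bit range and wrap around, which distorts the audio. The first function reproduces the shift on a single sample; the second is an alternative we are suggesting (not what the project's sketch does) that multiplies and clamps instead. Both assume signed 16-bit PCM samples, and the function names are hypothetical.

```cpp
#include <cassert>
#include <cstdint>

// Same operation as the loop above, on one sample: a left shift by `gain`
// multiplies by 2^gain, modulo 2^16 (values that overflow wrap around).
int16_t shift_gain(int16_t sample, unsigned gain) {
  assert(gain < 16);
  uint16_t raw = static_cast<uint16_t>(sample);
  raw = static_cast<uint16_t>(raw << gain);
  return static_cast<int16_t>(raw);
}

// Alternative: multiply, then clamp to the int16 range so loud samples
// saturate at full scale instead of wrapping to the opposite sign.
int16_t saturating_gain(int16_t sample, unsigned gain) {
  assert(gain < 16);
  int32_t scaled = static_cast<int32_t>(sample) * (1 << gain);
  if (scaled > INT16_MAX) scaled = INT16_MAX;
  if (scaled < INT16_MIN) scaled = INT16_MIN;
  return static_cast<int16_t>(scaled);
}
```

For quiet samples both functions agree; they differ only once the scaled value leaves the range -32,768 to 32,767.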


```cpp
// Write data to the WAV file
Serial.printf("Writing to the file ...\n");
if (file.write(rec_buffer, record_size) != record_size)

@@ -349,7 +368,7 @@ Serial.printf("Send rec for a new sample or enter


Finally, the audio data is written to the .wav file. If the write operation fails, it prints an error message. After writing, the memory allocated for rec_buffer is freed, and the file is closed. The function finishes by printing a completion message and prompting the user to send a new command.


```cpp
void generate_wav_header(uint8_t *wav_header,
                         uint32_t wav_size,
                         uint32_t sample_rate)

@@ -385,18 +404,20 @@ Ultimately, we will get the saved files on the SD card.


The files are ready to be uploaded to Edge Impulse Studio.


### Capturing (offline) Audio Data Apps


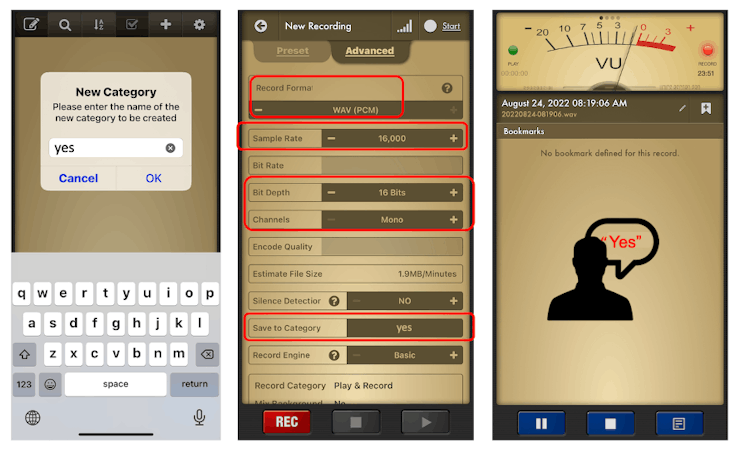


There are many ways to capture audio data; the simplest one is to use a mobile phone or a PC as a **connected device** on the [Edge Impulse Studio](https://docs.edgeimpulse.com/docs/edge-ai-hardware/using-your-mobile-phone).

> The PC or smartphone should capture audio data with a sampling frequency of 16 kHz and a bit depth of 16 bits.

Another alternative is to use dedicated apps. A good one is [*Voice Recorder Pro*](https://www.bejbej.ca/app/voicerecordpro) (iOS). Save your recordings as `.wav` files and transfer them to your computer.

\noindent

{width=90% fig-align="center"}

> Note that any audio recording app, such as [Audacity](https://www.audacityteam.org/), can be used, including on your computer.
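
Recordings made with third-party apps do not always use the required format, so it can be worth checking each header before uploading. The following is a minimal sketch of such a check. It assumes the simple canonical layout, in which the `fmt ` chunk immediately follows `WAVE`. Many recorders insert extra chunks, and this check would reject those files. `is_16k_16bit_pcm` and `get_le` are hypothetical helpers.

```cpp
#include <cstdint>
#include <cstddef>
#include <cstring>

// Read a little-endian unsigned integer of `n` bytes starting at `p`.
static uint32_t get_le(const uint8_t *p, int n) {
  uint32_t v = 0;
  for (int i = n - 1; i >= 0; --i) v = (v << 8) | p[i];
  return v;
}

// Hypothetical helper: true if `h` begins with a canonical 44-byte PCM WAV
// header describing 16 kHz, 16-bit audio (fixed offsets, no chunk walking).
bool is_16k_16bit_pcm(const uint8_t *h, size_t len) {
  if (len < 44) return false;
  if (std::memcmp(h, "RIFF", 4) != 0 || std::memcmp(h + 8, "WAVEfmt ", 8) != 0)
    return false;
  const uint32_t format = get_le(h + 20, 2);  // 1 = integer PCM
  const uint32_t rate = get_le(h + 24, 4);    // samples per second
  const uint32_t bits = get_le(h + 34, 2);    // bits per sample
  return format == 1 && rate == 16000 && bits == 16;
}
```

On a computer, the function can be run on the first 44 bytes read from each file. A more robust parser would walk the chunk list instead of assuming fixed offsets.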

## Training model with Edge Impulse Studio

### Uploading the Data

When the raw dataset is defined and collected (Pete's dataset + recorded keywords), we should initiate a new project at Edge Impulse Studio:

@@ -438,7 +459,7 @@ Suppose we do not split data automatically in train/test during upload. In that

> We can optionally check all datasets using the Data Explorer tab.

### Creating Impulse (Pre-Process / Model definition)

*An* **impulse** *takes raw data, uses signal processing to extract features, and then uses a learning block to classify new data.*

@@ -454,7 +475,7 @@ Each 1-second audio sample should be pre-processed and converted to an image (fo

Next, we select KERAS for classification and build our model from scratch, performing image classification with a Convolutional Neural Network (CNN).

### Pre-Processing (MFCC)

The next step is to create the images to be trained in the next phase:

@@ -474,7 +495,7 @@ For now, let's keep the parameters defined by the Autotuning tool. Save paramete

> If you want to go further with converting time-series data into images using FFT, spectrograms, etc., you can experiment with this Colab notebook: [Audio Raw Data Analysis](https://colab.research.google.com/github/Mjrovai/UNIFEI-IESTI01-TinyML-2022.1/blob/main/00_Curse_Folder/2_Applications_Deploy/Class_24/IESTI01_Audio_Raw_Data_Analisys.ipynb).
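
A 1-second sample becomes an "image" because the MFCC block slices the signal into short frames and computes a fixed number of coefficients per frame. The result is a 2-D array of frames × coefficients. The sketch below shows that arithmetic. Its 20 ms frame length and stride (320 samples at 16 kHz) and its 13 coefficients are illustrative assumptions, not the values chosen by the Studio's autotuner. The mel conversion uses the common `2595 * log10(1 + f / 700)` formula, and implementations vary.

```cpp
#include <cmath>
#include <cstddef>

// Hertz to mel, using the widely used 2595 * log10(1 + f / 700) formula.
// MFCC filterbanks space their triangular filters evenly on this scale.
double hz_to_mel(double hz) { return 2595.0 * std::log10(1.0 + hz / 700.0); }

// Number of frames when a window of `frame_len` samples slides over
// `n_samples` samples with a hop of `stride` samples (no padding).
size_t num_frames(size_t n_samples, size_t frame_len, size_t stride) {
  if (n_samples < frame_len || stride == 0) return 0;
  return 1 + (n_samples - frame_len) / stride;
}

// Total number of features (the "image" size) passed to the classifier.
size_t mfcc_feature_count(size_t n_samples, size_t frame_len, size_t stride,
                          size_t n_coefficients) {
  return num_frames(n_samples, frame_len, stride) * n_coefficients;
}
```

With these assumed settings, one second of audio yields a 50 × 13 feature map (650 values).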

### Model Design and Training

We will use a Convolutional Neural Network (CNN) model. The basic architecture consists of two blocks of Conv1D + MaxPooling (with 8 and 16 filters, respectively) and a 0.25 Dropout. The last layer, after flattening, has four neurons, one for each class:

@@ -493,7 +514,7 @@ If you want to understand what is happening "under the hood, " you can download

This Colab notebook shows how to go further: [KWS Classifier Project - Looking “Under the hood”](https://colab.research.google.com/github/Mjrovai/XIAO-ESP32S3-Sense/blob/main/KWS%20Training/xiao_esp32s3_keyword_spotting_project_nn_classifier.ipynb).
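
Counting the trainable parameters by hand shows how small this network is. The sketch below assumes a kernel size of 3, "same" padding, pooling by 2, and a 50 × 13 MFCC input with 13 input channels. These are illustrative assumptions, not values read from the Studio. The function names are hypothetical.

```cpp
#include <cstddef>

// Conv1D: each filter has kernel_size * in_channels weights plus one bias.
size_t conv1d_params(size_t kernel_size, size_t in_channels, size_t filters) {
  return (kernel_size * in_channels + 1) * filters;
}

// MaxPooling1D with pool size 2 and "same" padding rounds the length up.
size_t pool2_same(size_t length) { return (length + 1) / 2; }

// Dense: one weight per input per neuron, plus one bias per neuron.
size_t dense_params(size_t inputs, size_t neurons) { return (inputs + 1) * neurons; }

// Total for the assumed two-block architecture with four output classes.
// Pooling and Dropout layers have no trainable parameters.
size_t kws_cnn_params(size_t frames, size_t coefficients, size_t kernel_size) {
  size_t p = conv1d_params(kernel_size, coefficients, 8);  // block 1: 8 filters
  size_t len = pool2_same(frames);
  p += conv1d_params(kernel_size, 8, 16);                  // block 2: 16 filters
  len = pool2_same(len);
  p += dense_params(len * 16, 4);                          // flatten -> 4 classes
  return p;
}
```

Under these assumptions, the model has only 1,556 trainable parameters, or roughly 1.5 KB of weights once quantized to INT8.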

## Testing

Testing the model with the data set aside before training (Test Data), we got an accuracy of approximately 87%.

@@ -517,9 +538,9 @@ Your phone will be connected to the Studio. Select the option Classification on

\noindent

## Deploy and Inference

The Studio will package all the needed libraries, preprocessing functions, and trained models, downloading them to your computer. Select the Arduino Library option, then choose Quantized (Int8) from the bottom menu and press Build.

\noindent

{width=90% fig-align="center"}

@@ -540,7 +561,7 @@ Start changing the libraries to handle the I2S bus:

By:

```cpp
#include <I2S.h>
#define SAMPLE_RATE 16000U
#define SAMPLE_BITS 16
```

@@ -548,7 +569,7 @@ By:

Initialize the I2S microphone in `setup()` by adding the following lines:

```cpp
void setup()
{
  ...
```

@@ -567,7 +588,7 @@ On the static void capture_samples(void* arg) function, replace the line 153 tha

By:

```cpp
/* read data at once from i2s */
esp_i2s::i2s_read(esp_i2s::I2S_NUM_0,
                  (void*)sampleBuffer,
                  ...
```

@@ -587,7 +608,7 @@ Finally, on static void microphone_inference_end(void) function, replace line 24

By:

```cpp
static void microphone_inference_end(void)
{
  free(sampleBuffer);
  ...
```

@@ -597,16 +618,27 @@ static void microphone_inference_end(void)

You can find the complete code on the [project's GitHub](https://github.com/Mjrovai/XIAO-ESP32S3-Sense/tree/main/xiao_esp32s3_microphone). Upload the sketch to your board and test some real inferences:

> ⚠️ **Attention**
>
> - The XIAO ESP32S3 **MUST** have PSRAM enabled. You can check this in the Arduino IDE top menu: `Tools` --> `PSRAM: OPI PSRAM`.
> - The ESP32 board package (`esp32 by Espressif Systems`) should be **version 2.0.17**. Please do not update it.

\noindent

{width=80% fig-align="center"}

## Postprocessing

In edge AI applications, the inference result is only as valuable as our ability to act upon it. While serial output provides detailed information for debugging and development, real-world deployments require immediate, human-readable feedback that doesn't depend on external monitors or connections.

Let's explore two post-processing approaches: using the XIAO's internal LED and using the OLED display on the XIAOML Kit.

### With LED

Now that we know the model is working by detecting our keywords, let's modify the code to see the internal LED go on every time a YES is detected.

You should initialize the LED:

```cpp
#define LED_BUILT_IN 21
...
void setup()
...
```

@@ -620,7 +652,7 @@ void setup()

Then change the `// print the predictions` portion of the previous code (in `loop()`):

```cpp
int pred_index = 0;     // Initialize pred_index
float pred_value = 0;   // Initialize pred_value
...
```

@@ -659,23 +691,65 @@ The idea is that the LED will be ON whenever the keyword YES is detected. In the

<iframe class="react-editor-embed react-editor-embed-override" src="https://www.youtube.com/embed/wjhtEzXt60Q" frameborder="0" style="box-sizing: border-box; align-self: center; flex: 1 1 0%; height: 363.068px; max-height: 100%; max-width: 100%; overflow: hidden; width: 645.455px; z-index: 1;"></iframe>
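
The decision behind the LED can be tested away from the board. The following is a minimal sketch that assumes the model outputs one score per class, in the same order as its label list. `argmax`, `should_light_led`, the label order, and the 0.8 threshold are hypothetical choices for illustration, not values taken from the lab's sketch.

```cpp
#include <cstddef>
#include <cstring>

// Index of the highest score (argmax). Assumes n > 0.
size_t argmax(const float *scores, size_t n) {
  size_t best = 0;
  for (size_t i = 1; i < n; ++i)
    if (scores[i] > scores[best]) best = i;
  return best;
}

// Hypothetical rule: turn the LED on only when the top class is `target`
// and its score is above `threshold`.
bool should_light_led(const char *const *labels, const float *scores,
                      size_t n, const char *target, float threshold) {
  const size_t top = argmax(scores, n);
  return std::strcmp(labels[top], target) == 0 && scores[top] > threshold;
}
```

The threshold keeps a weak YES prediction, for example one scored at 0.55, from lighting the LED. On the board, the returned boolean would be written to `LED_BUILT_IN` with `digitalWrite()`. Check whether your LED is active-low before choosing `HIGH` or `LOW`.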

### With OLED Display

The XIAOML Kit's tiny 0.42" OLED display (72×40 pixels) serves as a post-processing component that turns raw inference results into immediate, human-readable feedback. It shows the detected class name and confidence level directly on the device. This removes the need for an external monitor and enables standalone edge AI deployments in industrial, agricultural, or retail settings where instant visual confirmation of a prediction is essential.

So, let's modify the sketch to automatically adapt to the model trained on Edge Impulse by reading the class names and count directly from the model. Download the code from GitHub: [xiaoml-kit_kws_oled](https://github.com/Mjrovai/XIAO-ESP32S3-Sense/tree/main/XIAOML_Kit_code/xiaoml-kit_kws_oled).
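
Because the class names come from the model, the display code only needs to fit whatever label it receives onto one row. In the generated Edge Impulse library, the label count and names are typically available as `EI_CLASSIFIER_LABEL_COUNT` and `result.classification[ix].label`. The sketch below uses a stand-in `kLabels` array instead, and assumes a 6-pixel-wide font, which gives 12 characters per 72-pixel line. `format_result_line` is a hypothetical helper.

```cpp
#include <cstddef>
#include <cstdio>
#include <cstring>

// Stand-ins for the label list exported with the model (hypothetical values).
constexpr size_t kLabelCount = 4;
const char *kLabels[kLabelCount] = {"no", "noise", "unknown", "yes"};

// Assumed font: 6 px wide glyphs, so a 72 px line holds 12 characters.
constexpr size_t kCharsPerLine = 72 / 6;

// Format "<label> <pct>%" into `out`, truncating the label so the whole line
// fits on one row of the display. Scores are assumed to be in [0, 1].
int format_result_line(char *out, size_t out_size, const char *label, float score) {
  const int pct = static_cast<int>(score * 100.0f + 0.5f);  // rounded percentage
  char pct_buf[8];
  const int pct_len = std::snprintf(pct_buf, sizeof pct_buf, " %d%%", pct);
  int max_label = static_cast<int>(kCharsPerLine) - pct_len;
  if (max_label < 0) max_label = 0;
  return std::snprintf(out, out_size, "%.*s%s", max_label, label, pct_buf);
}
```

Truncating the label rather than the percentage keeps the confidence value readable even when a model uses long class names.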

Running the code, we can see the result:

\noindent

{width=90% fig-align="center"}

## Summary

This lab demonstrated the complete development cycle of a keyword spotting system using the XIAOML Kit, showcasing how modern TinyML platforms make sophisticated audio AI accessible on resource-constrained devices. Through hands-on implementation, we've bridged the gap between theoretical machine learning concepts and practical embedded AI deployment.

**Technical Achievements:**

The project implemented a complete audio processing pipeline, from raw sound capture to real-time inference. Using the XIAO ESP32S3's integrated digital microphone, we captured audio at 16 kHz / 16-bit, a standard format for speech recognition, and processed it using Mel Frequency Cepstral Coefficients (MFCC) for feature extraction. On the test data, the deployed CNN model reached approximately 87% accuracy in distinguishing between our target keywords ("YES", "NO") and background conditions ("NOISE", "UNKNOWN"), with inference times suitable for real-time applications.

**Platform Integration:**

Edge Impulse Studio proved invaluable as a comprehensive MLOps platform for embedded systems, handling everything from data collection and labeling through model training, optimization, and deployment. The seamless integration between cloud-based training and edge deployment exemplifies modern TinyML workflows, while the Arduino IDE provided the flexibility needed for custom post-processing implementations.

**Real-World Applications:**

The techniques learned extend far beyond simple keyword detection. Voice-activated control systems, industrial safety monitoring through sound classification, medical applications for respiratory analysis, and environmental monitoring of wildlife or equipment sounds all use similar audio processing approaches. The cascaded detection architecture described in this lab, in which on-device KWS triggers more complex cloud processing, is fundamental to modern voice assistant systems.

**Embedded AI Principles:**

This project highlighted crucial TinyML considerations, including power management, memory optimization through PSRAM utilization, and the trade-offs between model complexity and inference speed. The successful deployment of a neural network performing real-time audio analysis on a microcontroller demonstrates how AI capabilities, once requiring powerful desktop computers, can now operate on battery-powered devices.

**Development Methodology:**

We explored multiple development pathways, from data collection strategies (offline SD card storage versus online streaming) to deployment options (Edge Impulse's automated library generation versus custom Arduino implementation). This flexibility is crucial for adapting to various project requirements and constraints.

**Future Directions:**

The foundation established here enables the exploration of more advanced audio AI applications. Multi-keyword recognition, speaker identification, emotion detection from voice, and environmental sound classification all build upon the same core techniques. The integration capabilities demonstrated with OLED displays and GPIO control illustrate how KWS can serve as the intelligent interface for broader IoT systems.

Consider that Sound Classification encompasses much more than just voice recognition. This project's techniques apply across numerous domains:

- **Security Applications**: Broken glass detection, intrusion monitoring, gunshot detection
- **Industrial IoT**: Machinery health monitoring, anomaly detection in manufacturing equipment
- **Healthcare**: Sleep disorder monitoring, respiratory condition assessment, elderly care systems
- **Environmental Monitoring**: Wildlife tracking, urban noise analysis, smart building acoustic management
- **Smart Home Integration**: Multi-room voice control, appliance status monitoring through sound signatures

**Key Takeaways:**

The XIAOML Kit proves that professional-grade AI development is achievable with accessible tools and modest budgets. The combination of capable hardware (ESP32S3 with PSRAM and integrated sensors), mature development platforms (Edge Impulse Studio), and comprehensive software libraries creates an environment where complex AI concepts become tangible, working systems.

This lab demonstrates that the future of AI lies not only in massive data centers but also in intelligent edge devices that can process, understand, and respond to their environment in real time. Such devices open possibilities for ubiquitous, privacy-preserving, and responsive artificial intelligence systems.

## Resources

- [XIAO ESP32S3 Codes](https://github.com/Mjrovai/XIAO-ESP32S3-Sense)
- [XIAOML Kit Code](https://github.com/Mjrovai/XIAO-ESP32S3-Sense/tree/main/XIAOML_Kit_code)
- [Subset of Google Speech Commands Dataset](https://cdn.edgeimpulse.com/datasets/keywords2.zip)
- [KWS MFCC Analysis Colab Notebook](https://colab.research.google.com/github/Mjrovai/Arduino_Nicla_Vision/blob/main/KWS/KWS_MFCC_Analysis.ipynb)