mirror of

https://github.com/harvard-edge/cs249r_book.git

synced 2026-05-05 09:09:13 -05:00

Created subfolders within images/ based on filetype

Better organization for the future to build a PDF etc. cause images need to be pulled from the right type for quality rendering. Currently, not being used but will be useful in the future and plus the organization now doesn't hurt by any means, only makes the "code" cleaner.

This commit is contained in:

@@ -32,7 +32,7 @@ Training models can consume a significant amount of energy, sometimes equivalent

|

||||

|

||||

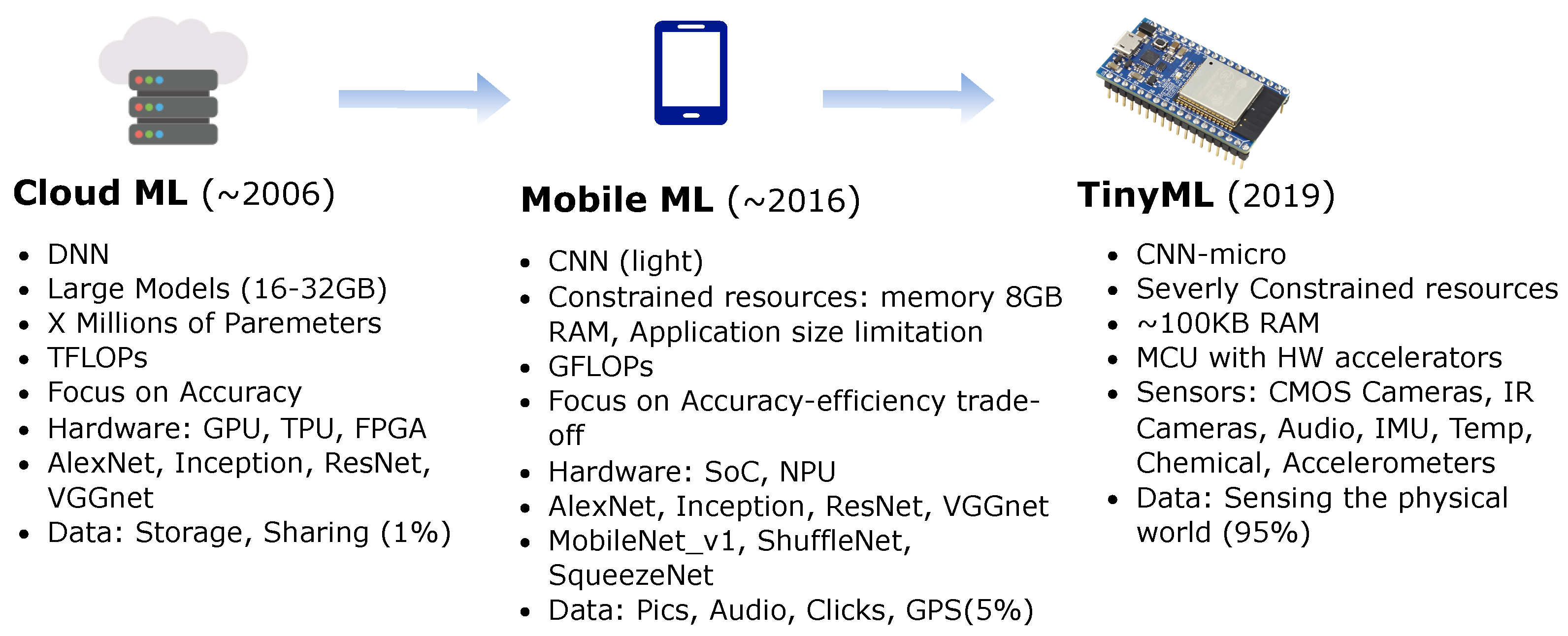

Efficiency takes on different connotations based on where AI computations occur. Let's take a brief moment to revisit and differentiate between Cloud, Edge, and TinyML in terms of efficiency.

|

||||

|

||||

{#fig-platforms}

|

||||

{#fig-platforms}

|

||||

|

||||

For cloud AI, traditional AI models often ran in the large—scale data centers equipped with powerful GPUs and TPUs [@barroso2019datacenter]. Here, efficiency pertains to optimizing computational resources, reducing costs, and ensuring timely data processing and return. However, relying on the cloud introduced latency, especially when dealing with large data streams that needed to be uploaded, processed, and then downloaded.

|

||||

|

||||

@@ -58,11 +58,11 @@ Model compression methods are very important for bringing deep learning models t

|

||||

|

||||

**Pruning**: This is akin to trimming the branches of a tree. This was first thought of in the [Optimal Brain Damage](https://proceedings.neurips.cc/paper/1989/file/6c9882bbac1c7093bd25041881277658-Paper.pdf) paper [@lecun1989optimal]. This was later popularized in the context of deep learning by @han2016deep. In pruning, certain weights or even entire neurons are removed from the network, based on specific criteria. This can significantly reduce the model size. There are various strategies, like weight pruning, neuron pruning, and structured pruning. We will explore these in more detail in @sec-pruning. In the example in @fig-pruning, removing some of the nodes in the inner layers reduces the numbers of edges between the nodes and, in turn, the size of the model.

|

||||

|

||||

{#fig-pruning}

|

||||

{#fig-pruning}

|

||||

|

||||

**Quantization**: Quantization is the process of constraining an input from a large set to output in a smaller set, primarily in deep learning, this means reducing the number of bits that represent the weights and biases of the model. For example, using 16-bit or 8-bit representations instead of 32-bit can reduce model size and speed up computations, with a minor trade-off in accuracy. We will explore these in more detail in @sec-quant. @fig-quantization shows an example of quantization by rounding to the closest number. The conversion from 32-bit floating point to 16-bit reduces the memory usage by 50%. And going from 32-bit to 8-bit integer, memory is reduced by 75%. While the loss in numeric precision, and consequently model performance, is minor, the memory usage efficiency is very significant.

|

||||

|

||||

{#fig-quantization}

|

||||

{#fig-quantization}

|

||||

|

||||

**Knowledge Distillation**: Knowledge distillation involves training a smaller model (student) to replicate the behavior of a larger model (teacher). The idea is to transfer the knowledge from the cumbersome model to the lightweight one, so the smaller model attains performance close to its larger counterpart but with significantly fewer parameters. We will explore knowledge distillation in more detail in the @sec-kd.

|

||||

|

||||

@@ -74,7 +74,7 @@ Model compression methods are very important for bringing deep learning models t

|

||||

|

||||

[Edge TPUs](https://cloud.google.com/edge-tpu) are a smaller, power-efficient version of Google's TPUs, tailored for edge devices. They provide fast on-device ML inferencing for TensorFlow Lite models. Edge TPUs allow for low-latency, high-efficiency inference on edge devices like smartphones, IoT devices, and embedded systems. This means AI capabilities can be deployed in real-time applications without needing to communicate with a central server, thus saving bandwidth and reducing latency. Consider the table in @fig-edge-tpu-perf. It shows the performance differences of running different models on CPUs versus a Coral USB accelerator. The Coral USB accelerator is an accessory by Google's Coral AI platform that lets developrs connect Edge TPUs to Linux computers. Running inference on the Edge TPUs was 70 to 100 times faster than on CPUs.

|

||||

|

||||

](images/tflite_edge_tpu_perf.png){#fig-edge-tpu-perf}

|

||||

](images/png/tflite_edge_tpu_perf.png){#fig-edge-tpu-perf}

|

||||

|

||||

**NN Accelerators**: Fixed function neural network accelerators are hardware accelerators designed explicitly for neural network computations. These can be standalone chips or part of a larger system-on-chip (SoC) solution. By optimizing the hardware for the specific operations that neural networks require, such as matrix multiplications and convolutions, NN accelerators can achieve faster inference times and lower power consumption compared to general-purpose CPUs and GPUs. They are especially beneficial in TinyML devices with power or thermal constraints, such as smartwatches, micro-drones, or robotics.

|

||||

|

||||

@@ -98,7 +98,7 @@ There are also several other numerical formats that fall into an exotic calss. A

|

||||

|

||||

By retaining the 8-bit exponent of FP32, BF16 offers a similar range, which is crucial for deep learning tasks where certain operations can result in very large or very small numbers. At the same time, by truncating precision, BF16 allows for reduced memory and computational requirements compared to FP32. BF16 has emerged as a promising middle ground in the landscape of numerical formats for deep learning, providing an efficient and effective alternative to the more traditional FP32 and FP16 formats.

|

||||

|

||||

](https://storage.googleapis.com/gweb-cloudblog-publish/images/Three_floating-point_formats.max-624x261.png){#fig-fp-formats}

|

||||

](https://storage.googleapis.com/gweb-cloudblog-publish/images/png/Three_floating-point_formats.max-624x261.png){#fig-fp-formats}

|

||||

|

||||

**Integer**: These are integer representations using 8, 4, and 2 bits. They are often used during the inference phase of neural networks, where the weights and activations of the model are quantized to these lower precisions. Integer representations are deterministic and offer significant speed and memory advantages over floating-point representations. For many inference tasks, especially on edge devices, the slight loss in accuracy due to quantization is often acceptable given the efficiency gains. An extreme form of integer numerics is for binary neural networks (BNNs), where weights and activations are constrained to one of two values: either +1 or -1.

|

||||

|

||||

|

||||

Reference in New Issue

Block a user